Task: Architect Exam Question Compilation#

The main focus is compiling past architect exam questions. For a project (to be shared later), the questions need to be converted from PDF into JSON format.

Background#

On September 28, 2025, I processed a first batch of questions covering years 112 back to 97 (ROC calendar, i.e. 2023 back to 2008). On November 4, 2025, I reviewed that batch manually and fixed some minor errors.

On February 8, 2026, because the Gemini free trial API was about to expire, I had to tackle compiling the year 114 questions earlier than planned.

Setup#

What I did was actually quite simple: I passed the past exam PDFs and an already compiled sample JSON to the LLM as reference and asked it to do the conversion for me.

Prompt#

Please completely convert the Q&A md content for year 114 into JSON. You can refer to the format of year 111.

Attached Files#

- 114 Architect Regulations Exam Questions

- 114 Architect Regulations Updated Answers

- Year 111 Regulations Dataset JSON

[
  {
    "id": "111-1801-Q01",
    "year": 2022,
    "subject": "營建法規與實務",
    "question_type": "multiple_choice",
    "question_text": "依建築師法之規定,下列何者非建築師開業執行業務的必要條件?",
    "options": {
      "A": "設立建築師事務所",
      "B": "領得開業證書",
      "C": "成立室內裝修公司",
      "D": "加人該管直轄市、縣(市)建築師公會"
    },
    "answer": [
      "C"
    ],
    "explanation": null,
    "source": "111180_1801_original.md",
    "tags": [],
    "answer_mode": "single"
  },
  {
    "id": "111-1801-Q02",
    "year": 2022,
    "subject": "營建法規與實務",
    "question_type": "multiple_choice",
    "question_text": "依非都市土地使用管制規則規定,有關非都市土地之各種建築用地,其建蔽率及容積率,下列敘述何者正確?",
    "options": {
      "A": "丙種建築用地:建蔽率 $40 \\%$ ;容積率 $160 \\%$",
      "B": "甲種建築用地、乙種建築用地:建蔽率 $60 \\%$ ;容積率 $240 \\%$",
      "C": "交通用地、遊憩用地、殯葬用地:建蔽率 $40 \\%$ ;容積率 $140 \\%$",
      "D": "特定目的事業用地:建蔽率 $60 \\%$ ;容積率 $160 \\%$"
    },
    "answer": [
      "B"
    ],
    "explanation": null,
    "source": "111180_1801_original.md",
    "tags": [],
    "answer_mode": "single"
  },
  ......
]
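To make the expected shape of each record concrete, here is a minimal Python sketch of a per-record check. It is not part of the original workflow. The field names come from the year 111 sample; the ID pattern, the assumption that every question has exactly options A to D, and the function name `check_record` are my own inferences from that sample. The year check relies on the ROC year plus 1911 giving the Gregorian year (111 + 1911 = 2022).

```python
import re

# Field names taken from the year 111 sample record.
REQUIRED_FIELDS = {
    "id", "year", "subject", "question_type", "question_text",
    "options", "answer", "explanation", "source", "tags", "answer_mode",
}
# Assumed ID pattern, inferred from "111-1801-Q01": ROC year, subject code, question number.
ID_PATTERN = re.compile(r"^(\d{2,3})-(\d{4})-Q(\d{2})$")


def check_record(record: dict) -> list:
    """Return a list of human-readable problems found in one question record."""
    problems = []
    missing = REQUIRED_FIELDS - record.keys()
    if missing:
        problems.append(f"missing fields: {sorted(missing)}")
    match = ID_PATTERN.match(str(record.get("id", "")))
    if match is None:
        problems.append(f"unexpected id: {record.get('id')!r}")
    elif record.get("year") != int(match.group(1)) + 1911:
        problems.append("year does not match the ROC year in the id")
    options = record.get("options") or {}
    # Assumption: every question has exactly the four options A to D.
    if sorted(options) != ["A", "B", "C", "D"]:
        problems.append(f"unexpected option keys: {sorted(options)}")
    for letter in record.get("answer") or []:
        if letter not in options:
            problems.append(f"answer {letter!r} is not an option")
    return problems


sample = {
    "id": "111-1801-Q01",
    "year": 2022,
    "subject": "營建法規與實務",
    "question_type": "multiple_choice",
    "question_text": "依建築師法之規定,下列何者非建築師開業執行業務的必要條件?",
    "options": {"A": "設立建築師事務所", "B": "領得開業證書",
                "C": "成立室內裝修公司", "D": "加人該管直轄市、縣(市)建築師公會"},
    "answer": ["C"],
    "explanation": None,
    "source": "111180_1801_original.md",
    "tags": [],
    "answer_mode": "single",
}
print(check_record(sample))
```

Running a check like this over an LLM-produced file would catch structural drift early, before any manual reading of the question text.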

Test Environment and Subjects#

Everything was run on a MacBook, using the official desktop app (Claude) and the official web interfaces (Gemini, GPT).

Four setups were tested:

- Gemini 3 Pro

- GPT 5.2 Thinking

- Claude Sonnet 4.5 (Extended thinking)

- Claude Cowork (Claude Sonnet 4.5 Extended thinking)

Results#

Gemini and GPT both did fine!#

Overall it was much better than September last year, but based on experience I still used the Gemini output for subsequent correction. Below is a deeper analysis: first the situation last year, then the differences between models this year, and a comparison with last year.

Looking back at last year#

Last year’s implementation wasn’t documented in a structured way; there were only some short notes from the process. Here I’m reconstructing the situation from those notes.

At that time Gemini was still at 2.5. I also tested GPT 5 and Claude 4, and tried doing the compilation directly inside Cursor.

Effectively, only Gemini 2.5 Pro (Official Web), Gemini 2.5 Pro (Cursor), and Claude 4 Sonnet (Cursor) could complete the task. Claude only barely succeeded in the Cursor environment; in the official app’s chat window the question numbers were completely wrong.

Notes from then:

cursor auto (write python) completely failed

claude desktop question numbers messed up

chatgpt agent partial LaTeX issues with math formulas

chatgpt 5 big failure

gemini most stable

cursor (gemini) faster and cheaper than (claude), but claude also stable

Prompt used in Cursor at the time

Refer to @112190_1801_original.md @112190_MOD1801_original.md and the format/logic of @law_112_dataset.json. Please manually convert @57819m_original.md @037801100_original.md into the corresponding JSON. Generate directly; do not use any other conversion method.

(Note: Some materials had even gone through an OCR step first to produce MD, then were compiled into JSON.)

When doing manual correction in November, the main recurring issues were:

- Contamination: When a question spanned two pages, unrelated text (e.g. exam page numbers) often got mixed in. From memory, this wasn’t limited to OCR’d versions.

- Full-width vs half-width: Mainly ％ vs %. Since the exam is in Taiwan, full-width ％ was used in the end.

- Number formatting: Numbers often had spaces before/after (e.g. 總共 1000 元). This might have been how the exam sheet was typeset; for some reason I got stuck on it and manually removed all such spaces… a waste of time.

- Formula notation: Similar to the above. The JSON produced by the LLM sometimes used LaTeX for formulas or even for numbers/percentages in text. Pros and cons, but back then I removed those formulas manually.

- Typos: This was the most surprising. Found when using Ctrl+F for the same character. Some typos were quite unusual (e.g. 内 (wrong) vs 內 (correct), 奬 (variant) vs 獎, 䨿 vs 廠). You’d never notice them in normal reading.
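Several of the issues in the list above are mechanical enough to flag automatically. The following Python sketch is only an illustration, not what I actually ran: the regular expressions are heuristics, the watch list `SUSPECT_CHARS` is a hypothetical starting point built from the typos mentioned above, and the function name `lint_text` is made up. Page-break contamination is left out because it has no reliable pattern.

```python
import re

# Heuristic patterns for the recurring issues; all of them are assumptions.
CHECKS = {
    # U+FF05 is the full-width percent sign.
    "full-width percent": re.compile("\uFF05"),
    # A space between a CJK character and a digit, on either side of the number.
    "space around number": re.compile(
        r"(?<=[\u4e00-\u9fff]) +\d|\d +(?=[\u4e00-\u9fff])"
    ),
    # Inline LaTeX such as $40 \%$ left in plain text.
    "LaTeX residue": re.compile(r"\$[^$]*\$"),
}
# Hypothetical watch list: look-alike character -> expected character.
SUSPECT_CHARS = {"内": "內", "奬": "獎"}


def lint_text(text: str) -> list:
    """Return the names of all checks that fire on one piece of text."""
    findings = [name for name, pattern in CHECKS.items() if pattern.search(text)]
    findings += [f"{bad} -> {good}" for bad, good in SUSPECT_CHARS.items() if bad in text]
    return findings


print(lint_text("總共 1000 元"))
print(lint_text("建蔽率 $40 \\%$"))
```

A lint like this only points at candidates. Whether to keep or remove the spaces and the LaTeX is still a manual decision.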

Today#

First, subjectively Gemini felt the fastest.

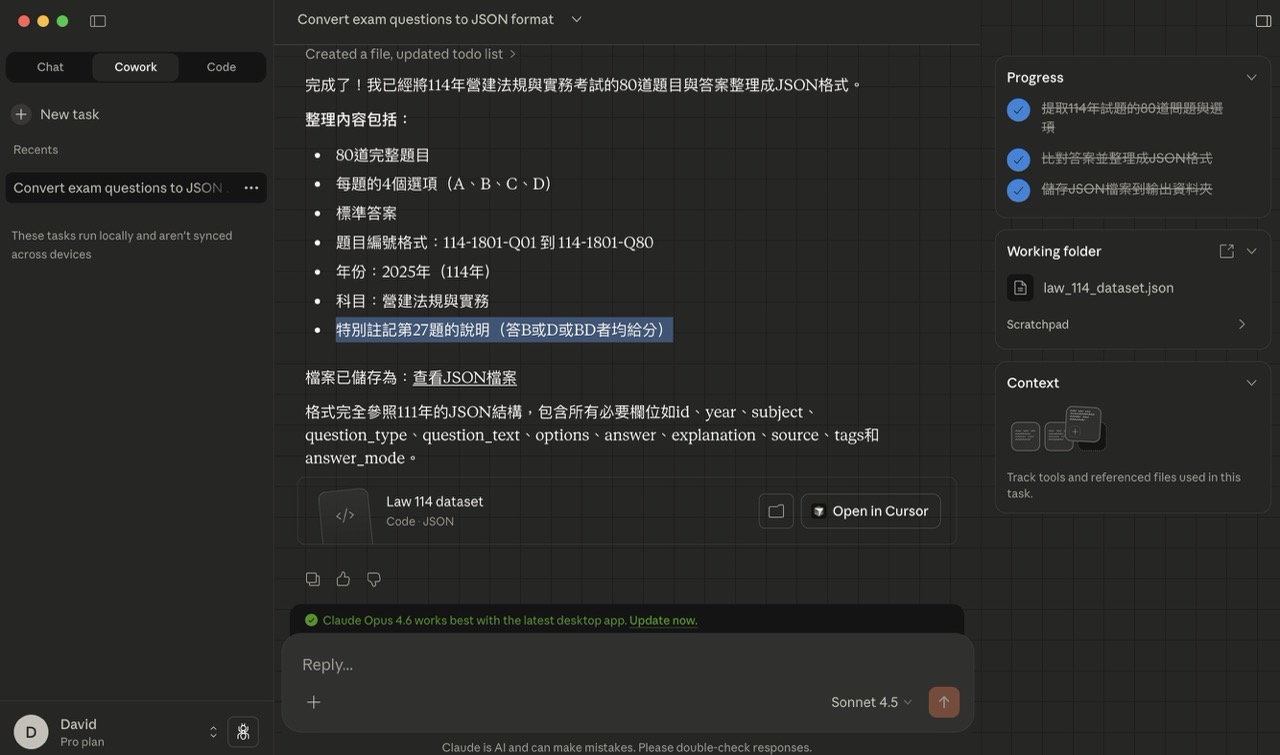

Back to today’s workflow: basically run Claude, Gemini, and GPT once each, then ask Cursor’s Composer 1 to check.

Prompt

@data/processed/114/law_114_dataset_claude.json @data/processed/114/law_114_dataset_gemini.json @data/processed/114/law_114_dataset_gpt.json — please compare these three versions and see if there are any differences.

Result: Claude had many errors, including mismatches between questions and answers. GPT was basically in line with Gemini; at least Cursor didn’t flag issues (only indentation/whitespace differences).
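I delegated this comparison to Cursor, but the same kind of check can be scripted. Below is a minimal Python sketch under my own assumptions: each file is a JSON array of records keyed by `id`, only four fields are compared, and whitespace differences are ignored. The helper names `load_by_id`, `normalize`, and `diff_versions` are hypothetical, and the ID used in the example is made up.

```python
import json
import re
from pathlib import Path


def load_by_id(path: str) -> dict:
    """Load one dataset file (a JSON array) and index its records by id."""
    records = json.loads(Path(path).read_text(encoding="utf-8"))
    return {record["id"]: record for record in records}


def normalize(value):
    """Collapse whitespace in strings so indentation-only differences are ignored."""
    if isinstance(value, str):
        return re.sub(r"\s+", " ", value).strip()
    if isinstance(value, dict):
        return {key: normalize(item) for key, item in value.items()}
    if isinstance(value, list):
        return [normalize(item) for item in value]
    return value


def diff_versions(versions: dict,
                  fields=("question_text", "options", "answer", "answer_mode")) -> list:
    """Return (id, field, {version name: value}) for every field that disagrees."""
    all_ids = sorted(set().union(*(records.keys() for records in versions.values())))
    diffs = []
    for qid in all_ids:
        for field in fields:
            seen = {name: normalize(records.get(qid, {}).get(field))
                    for name, records in versions.items()}
            values = list(seen.values())
            if any(value != values[0] for value in values[1:]):
                diffs.append((qid, field, seen))
    return diffs


# In-memory example with a hypothetical id; real use would call load_by_id on
# the three files under data/processed/114/.
gemini = {"114-1801-Q01": {"question_text": "foo  bar", "answer": ["A"]}}
claude = {"114-1801-Q01": {"question_text": "foo bar", "answer": ["B"]}}
for qid, field, seen in diff_versions({"gemini": gemini, "claude": claude}):
    print(qid, field, seen)
```

Agreement between versions does not prove correctness, since all models could make the same mistake, so this complements the check against the PDF rather than replacing it.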

I then tried Cowork mode to see if Claude could redeem itself. The question–answer mapping was correct this time, but Claude’s handling of “corrected answers” is still unstable. The Cowork version did catch Q27’s “multiple correct answers,” explained in the explanation field that B and D are both correct, and set answer_mode to “any” correctly, but in the correct-answer field it only filled in B. The regular version didn’t have this bug and actually handled that special question correctly.
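The Q27 bug is a mismatch between answer_mode and the answer list, which is easy to test for. This Python sketch assumes that "single" means exactly one listed answer and that "any" means at least two listed answers, any of which scores. That reading is my interpretation of the two values seen here, not a documented schema, and `check_answer_mode` is a hypothetical name.

```python
def check_answer_mode(record: dict) -> list:
    """Flag records whose answer list does not fit their answer_mode."""
    answers = record.get("answer") or []
    mode = record.get("answer_mode")
    problems = []
    # Assumption: "single" questions list exactly one correct option.
    if mode == "single" and len(answers) != 1:
        problems.append(f"single mode but {len(answers)} answers listed")
    # Assumption: "any" questions list every option that scores, so at least two.
    if mode == "any" and len(answers) < 2:
        problems.append(f"any mode but only {len(answers)} answer(s) listed")
    return problems


# Shape of the Cowork output for Q27 as described above: mode "any", only B filled in.
cowork_q27 = {"answer": ["B"], "answer_mode": "any"}
print(check_answer_mode(cowork_q27))
```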

The detailed check is attached here.

Comparison with last year#

I did manual correction on the Gemini version. The flow was: check against the PDF answers, Ctrl+F for typos, quick scan of each question (for contamination). The only issue was full-width vs half-width % (I used half-width everywhere). None of the other problems from last year appeared—especially the spaces around numbers and formula notation came out already in the corrected form (I didn’t give the LLM the corrected JSON). That was the most surprising part. In short, the check took under five minutes and saved a lot of time, so I decided to write this post.

Comparing the four setups’ process#

Here I analyze and compare based on the actual process (Thinking Log, etc.). First, Claude did not show the full thinking process; it was mostly summarized. Cowork summarized even more; although there is a Process panel in the UI, from the chat you can’t tell where it did those checkpoints.

GPT’s Thinking Log was still high quality, preserving many details including what Python was run in the middle. Gemini’s was the worst—really uninformative; you couldn’t tell whether it had read the files correctly (or whether it had searched the web). The whole process was reduced to vague summaries.

I had GPT summarize the execution process for each of the four setups: GPT, Gemini, Claude, Claude Cowork.

Then I asked GPT 5.2 Thinking to compare the four in detail, which produced the following table:

| Dimension | Claude | Cowork | GPT | Gemini |
|---|---|---|---|---|
| Process presentation style | Chronological + phased (~1–16 steps), process/SOP oriented | Concise linear (~10 steps), “task list” style | 6 major phases + ~20 detailed steps, “engineering spec” style | 9 major modules + ~26 steps, “data governance / QA checklist” style |
| Task understanding / assumptions | Clearly lists three files and goal: 114 Q&A → JSON, format aligned to 111 | Same, but emphasizes “confirm file list first” | Adds context and constraints: Q and answers in separate PDFs, 80 questions, confirm special rules first | First defines that source may include OCR; clarifies question types and rules first |
| Use of reference (111 JSON) | Opens 111 JSON first, infers field spec (including answer_mode) | Directly lists field list; strongest sense of field presence | Emphasizes “align to 111 format” but focuses on how to implement parsing/integration | Defines output schema first, then requires full alignment with 111 example (schema-first) |
| Question extraction (Question PDF) | Describes splitting Q01–Q80, but few tool/details | Only says “parse PDF and extract content,” more like a summary | Concrete enough to follow: pdfplumber page-by-page → merge → clean → split questions → handle line breaks | Extraction and cleaning split in detail: question/option extraction + OCR cleanup + special-question fixes |
| Answer handling (Answer Key) | Builds “question # → answer” mapping; identifies Q27 scoring rule → adjusts answer_mode | Mentions reading answers, but mapping details are sparse | Parses answer page format → builds answers_map; Q27 special case (symbol/correction) → any mode + explanation | Dedicated section for answer mapping, explicitly includes Q27 any mode |
| ID / naming and output file | Clear ID rule (year-subjectCode-Q#); output filename; includes delivery/move to output dir | Has output filename and completion report | Even output params (UTF-8/ensure_ascii/indent) + readability check | Fewer file-engineering details; focus on “data finalization” |
| Quality control / validation | Has question count and number range, but few QA items | “Use Python to integrate and validate” but validation items not listed | Explicit validation: 80 questions, 4 options each, JSON readable, special questions marked | Heaviest validation: per-question check of Q/options/answer, missing/misplaced; also “compare with original PDF” |
| Exceptions / special questions | Focus on Q27, adjust answer_mode (described as “multiple answers score”) | Almost no Q27 handling detail | Defines Q27 as correction question, answer_mode=any, keeps explanation text | Besides Q27, lists Q34/Q35/Q47 etc. needing fixes (more like manual correction list) |
| One-line summary | Project SOP: receive → analyze → convert → output → deliver; strong process feel | Shortest and fastest: good for quick alignment, but few actionable details | Most engineering-oriented: can directly implement parser/workflow from it | Most data-quality governance: OCR cleanup + per-question QA + most complete fix list |

From the table, Gemini looks the most reliable, but honestly, given how its thinking log is displayed, I trust it the least. That said, I had already been using Gemini 2.5 as the main processor before, and this task didn’t require “web search,” so I still chose to trust Gemini.
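The validation items and output parameters that GPT's log mentions (80 questions, readable JSON, UTF-8/ensure_ascii/indent) can be sketched in a few lines of Python. This is my reconstruction, not GPT's actual code: the concrete values `ensure_ascii=False` and `indent=2`, the function names, and the "114-1801" ID prefix in the example are assumptions.

```python
import json
import re


def validate_dataset(records: list, expected_count: int = 80) -> list:
    """Dataset-level checks: question count, duplicate ids, gaps in numbering."""
    problems = []
    if len(records) != expected_count:
        problems.append(f"expected {expected_count} questions, got {len(records)}")
    ids = [str(record.get("id", "")) for record in records]
    if len(set(ids)) != len(ids):
        problems.append("duplicate ids found")
    # Question numbers are read from the trailing "Q<number>" part of each id.
    numbers = {int(m.group(1)) for m in (re.search(r"Q(\d+)$", i) for i in ids) if m}
    missing = sorted(set(range(1, expected_count + 1)) - numbers)
    if missing:
        problems.append(f"missing question numbers: {missing}")
    return problems


def dump_dataset(records: list) -> str:
    """Serialize with CJK text kept readable (assumed values for the output params)."""
    return json.dumps(records, ensure_ascii=False, indent=2)


# Hypothetical records; the "114-1801" prefix is an assumption for illustration.
records = [{"id": f"114-1801-Q{n:02d}", "subject": "營建法規與實務"} for n in range(1, 81)]
print(validate_dataset(records))
# Round trip: the serialized text must parse back to the same data.
print(json.loads(dump_dataset(records)) == records)
```

With `ensure_ascii=False` the Chinese text stays human-readable in the file, which matters for the Ctrl+F typo pass during manual correction.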

Discussion#

Three months ago, basically only two models could barely help finish this tedious task; this year all three can do it, and manual correction time was clearly reduced.

Regarding this year’s main improvements and Claude’s failures, here are some inferences from my experience:

- PDF visual recognition has improved across models (or backends). In the past it may have been mainly reading internal PDF data; now it may also include taking screenshots and using vision for a double check (details in this unwritten post). In this task, GPT’s thinking log didn’t show use of vision—just data processing—and I didn’t inspect its final output further. Gemini’s thinking was still vague; I can’t confirm, but from the description it seemed to go through a first-stage OCR, then use vision to re-check:

Q34: Clarify ambiguous or misplaced options

Q35: Confirm question and option completeness

Q41: Check data consistency

Q46: Complete truncated question or option text

Q47: Complete missing data extraction

- Claude’s vision is still relatively weak. From the question–answer mapping errors, we can infer problems when extracting the answer PDF (presented as a table). Cowork did succeed; from the execution log it looked like a direct success, not a loop of finding issues and fixing. So we can only say that for this task, Claude Cowork is tuned better than normal chat.

- Claude is verbose: even though the example had empty tags for every question, Claude filled one in for every question.

- Explainability of AI agent execution is still very important.

Summary#

Three months, one model generation upgrade. Time to get back to work!